Claude Mythos is an AI that's SO good at hacking, its creators have been forced to lock it away

Financiers and politicians are concerned about the potential impact on global banking systems

Claude Mythos is a new Artificial Intelligence (AI) model that's so powerful, its creators at Anthropic decided to withhold it from the public over concerns about its superhuman hacking capabilities.

This ChatGPT competitor is purportedly able to identify flaws in IT systems and then craft code to exploit them, taking control or bringing down critical software and infrastructure. Anthropic claims Mythos has already found major flaws in every major browser and operating system, which could disrupt the world’s most important software.

What makes Claude Mythos so troubling is its ability to do this at scale, removing the need for the manpower and technical acumen usually required to execute a sophisticated cyberattack. That could further lower the barrier to entry for attackers and increase the speed and precision of advanced threats.

In an early example of its capabilities, Mythos discovered a flaw in a critical piece of security infrastructure built into the Linux kernel that had gone unnoticed by human security experts for 27 years – a weak point that could threaten a swathe of the online services you rely on every day, including streaming and online banking.

Instead of releasing it to the public, Anthropic is first offering Mythos to companies that run much of our critical infrastructure, including Apple, Microsoft and Google.

|REUTERS

Almost everything we depend on in the physical world, from airports and hospitals to public transport networks, relies on software. Cyberattacks have crippled NHS hospitals, forced major transport hubs to close, and knocked out power grids. Until now, such large-scale attacks demanded the kind of expertise and resources usually available only to a government. But Mythos could hand these capabilities to amateurs while simultaneously turbocharging professionals’ destructive power.

Unsurprisingly, the leashed AI model from Anthropic dominated discussions at the International Monetary Fund (IMF) meeting in Washington DC earlier this week. Speaking to the BBC, Canadian Finance Minister François-Philippe Champagne revealed: "It is serious enough to warrant the attention of all the finance ministers.

"The difference is that the Strait of Hormuz - we know where it is and we know how large it is... the issue that we're facing with Anthropic is that it's the unknown, unknown. This is requiring a lot of attention so that we have safeguards, and we have process in place to make sure that we ensure the resiliency of our financial systems."

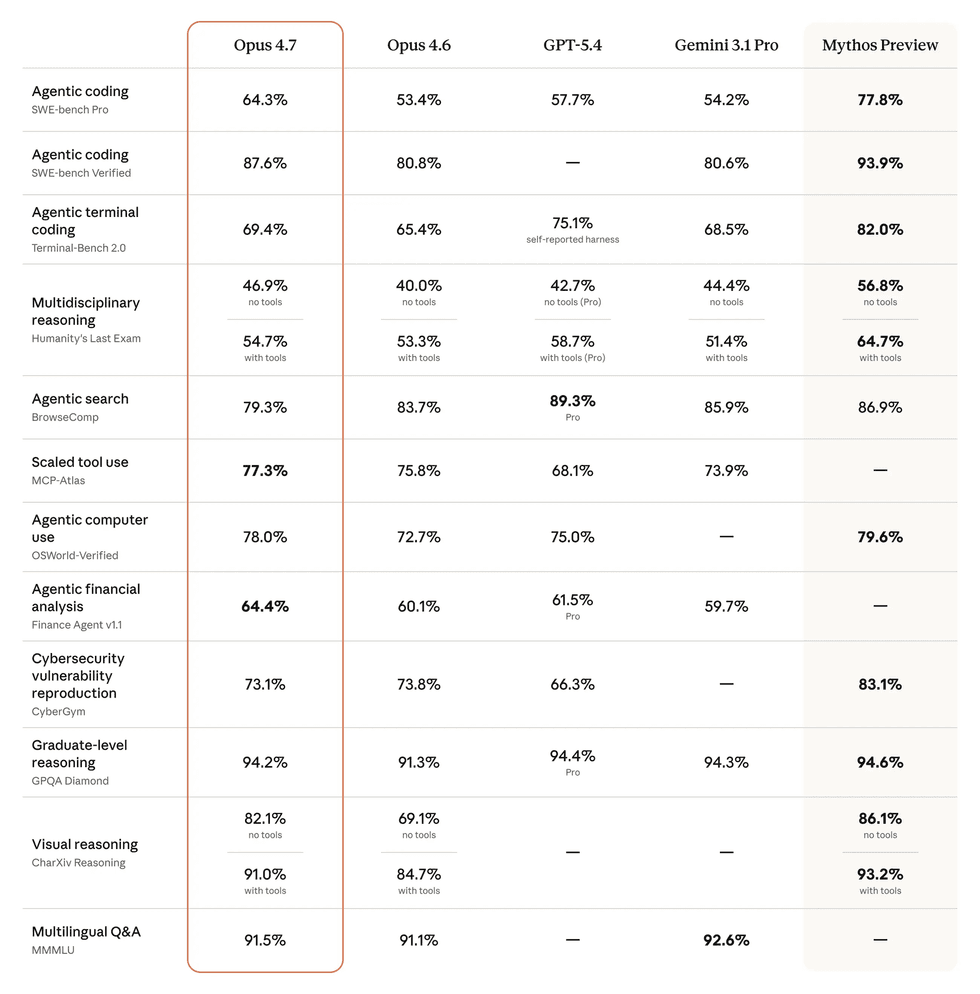

While Mythos is still under lock and key, Anthropic's latest publicly available Claude model, dubbed Claude Opus 4.7, started to roll out earlier this week. In a chart shared on its blog, Anthropic compared its capabilities against other publicly available models and its own Mythos.

|ANTHROPIC PRESS OFFICE

Rather than release Mythos, Anthropic has started to roll out Claude Opus 4.7 to the public, an updated AI model without the same risks. Whispers at the IMF meeting suggest that another prominent AI company in the United States is planning to release a new model with the same powers as Mythos, but without the same safeguards.

Anthropic hasn't shelved its controversial AI model.

It launched Project Glasswing, which aims to channel the AI model's capabilities into defence. Behind closed doors, more than 40 companies are using Mythos to identify vulnerabilities before malicious actors do. The United States government is also preparing, according to a report this week, to make a version of Mythos available to major federal agencies to strengthen their defences.

Mythos has found "thousands" of major vulnerabilities in operating systems, web browsers and other software. Its ability to code at a high level has given it a potentially unprecedented capacity to identify cybersecurity vulnerabilities and devise ways to exploit them, experts said.

Gregory Barbaccia, federal chief information officer at the White House Office of Management and Budget, told Cabinet department officials in an email on Tuesday that the OMB was setting up protections to allow their agencies to begin using Mythos, according to Bloomberg News.

"We're working closely with model providers, other industry partners, and the intelligence community to ensure the appropriate guardrails and safeguards are in place before potentially releasing a modified version of the model to agencies," Barbaccia said in the email, which had "Mythos Model Access" as the subject, the report said.

Barbaccia's email does not definitively say that various agencies would get Mythos access, nor does it provide a timeline for when it might come or how they might use it, an exclusive report from Bloomberg revealed.

An official said the White House was working with frontier AI labs to ensure their models help secure critical software vulnerabilities, adding that any new technology requires a technical period of evaluation for fidelity and security.

If you're not familiar, Anthropic was founded in 2021 by Dario Amodei and a group of former OpenAI researchers. Its flagship AI, Claude, is trained using what the company calls “constitutional AI,” a method meant to embed rules and guardrails directly into the system.

Most AI systems learn by being corrected over and over again by humans. A person looks at an answer and says “this is good” or “this is bad,” and the system adjusts. Anthropic employs some of this, but it also adds a built-in rule book with guidelines such as:

- Don’t produce harmful or misleading content

- Try to be honest and clear

- Avoid encouraging dangerous behaviour

Anthropic has also attracted tens of billions of dollars in investment from some of the most influential players in tech, including Amazon and Google. In terms of its relationship with the US government, the company has taken a firm stance against allowing its technology to be used for certain military and surveillance applications, a decision that has complicated its access to defence contracts.

At one point, this caution led to it being viewed as a potential supply chain risk by the US Department of Defence. However, Anthropic is still actively exploring ways to work with government agencies, attempting to balance its safety commitments with the realities of operating in a sector increasingly tied to national security.

While full details about Mythos haven’t been publicly released yet, it’s believed to be significantly more advanced than current AI systems, especially when it comes to analysing complex data and identifying patterns.

A protester holds up a placard that reads "ASI will kill us". This initialism refers to the idea of an Artificial Superintelligence that can outperform humans in every cognitive field, including creativity, general wisdom, and social skills.

|REUTERS

Officials have been in contact with major banks, urging them to “stress-test” their systems—essentially, to simulate attacks and check for vulnerabilities—before Mythos is made publicly available.

The AI space is largely shaped by two other major players: OpenAI, the company behind ChatGPT, and DeepSeek.

OpenAI was founded in 2015 by a group of prominent Silicon Valley figures, including Sam Altman, Elon Musk, Greg Brockman, Ilya Sutskever, John Schulman and others.

Its chatbot, ChatGPT, has become the most widely adopted AI assistant, known for its versatility and broad reach.

In contrast, China-based DeepSeek is much newer. It was founded by Chinese entrepreneur Liang Wenfeng and is backed by the quantitative trading firm High-Flyer. The company has quickly gained attention for releasing high-performance models at relatively low cost.