Malicious robots are secretly joining your Microsoft Teams meetings – how to block them NOW

Microsoft is rolling out a handy new feature to block these 'bots

- Third-party robots have been accessing Microsoft Teams calls

- Many are malicious and can record your private conversations

- Microsoft is rolling out a new feature in May to help block them

- There are preventative steps you can take now as a precaution


You might want to double-check your colleague's notetaking assistant before you let it into your next virtual meeting. Third-party bots have been sitting in on Microsoft Teams calls without participants realising.

Many of these 'bots are designed as helpful tools, often functioning as Artificial Intelligence (AI) assistants that take notes during video calls or summarise the discussion for review afterwards. You might've even noticed a few of these cropping up across your Teams calls in recent months.

However, fraudsters have become increasingly aware of this common practice. As a result, malicious bots have begun slipping into calls disguised as legitimate assistants, sometimes mistaken for a colleague's notetaker. If successful, they can cause quite a bit of harm: recording your confidential discussions, taking screenshots, or even attempting to get malware installed on your devices.

To prevent this from continuing, Microsoft is working on an update that will help you better screen who, or what, is joining your calls. When a third-party bot attempts to join your meeting, the organiser will need to grant it specific permission before it is let in.

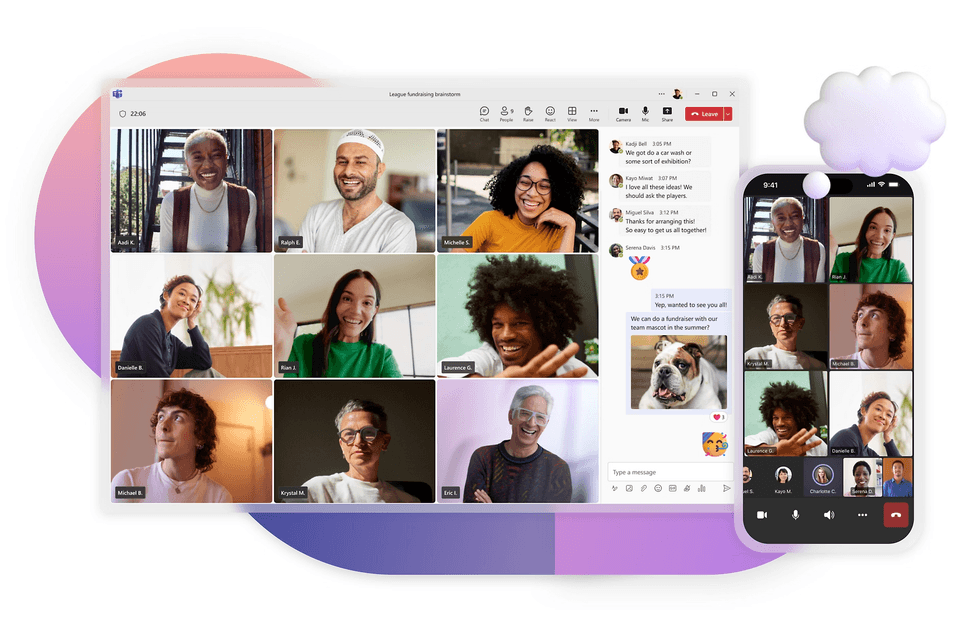

Microsoft Teams was created to let you chat and hold calls with others, and has become popular in work settings

|UNSPLASH

Commentary from Microsoft 365 Roadmap explains, "During Teams meetings, if there is an external 3P bot trying to join the meeting, organisers will be able to see a clear representation of the bots while they wait in the lobby.

"Organisers will be required to explicitly and separately admit these bots into the meeting, if really required. This approach will ensure that no one inadvertently accepts the external bots into the meeting ensuring that the organisers have full control over the presence of these bots."

This new feature essentially gives you more information so you can make a more informed decision on who you're authorising into the meeting room. The expected rollout for this new feature won't begin until May this year. It'll be available on Teams for Windows, macOS, Android, iOS, and Linux devices.

However, if you want to protect your meetings from bots beforehand, there are a few steps you can take now.


1. Require approval before anyone joins

After you've scheduled your meeting, you can set permissions so that you have to manually approve everyone who requests to join. This creates a "lobby", otherwise known as a waiting room, for both people and bots.

- Open the meeting invite

- Click Meeting Options

- Under “Who can bypass the lobby?”, select: Only me or People in my organisation

- Save the settings

If you do let in a suspicious bot by accident, you can remove it immediately by following these steps:

- Open Participants

- Click (...) next to its name

- Select Remove from meeting

There are several steps you can take to screen who's joining your meetings ahead of the new Microsoft feature update

|MICROSOFT PRESS OFFICE

2. Turn off anonymous joining

Malicious bots can often slip into a meeting through anonymous join links that participants have passed around. To turn this ability off:

- Navigate to Meeting Options

- Find "Allow anonymous users to join a meeting"

- Set it to "Off"

3. Lock the Teams meeting after it starts

Once everyone has joined the meeting, you can lock it so no one else can get in. This way, no one will need to monitor participants while the call is underway. You can set this up by taking these steps:

- Click Participants in the meeting toolbar

- Select More options

- Click Lock the Meeting
